In science fiction films, we have watched digital assistants manage every aspect of people's lives, from booking flights and ordering groceries to scheduling appointments, with no effort from the user. In 2026, we have a better chance of seeing this become reality. Companies such as Apple, along with semiconductor manufacturers including Qualcomm, are developing "agentic AI": assistants that can do things for you rather than just talk to you.

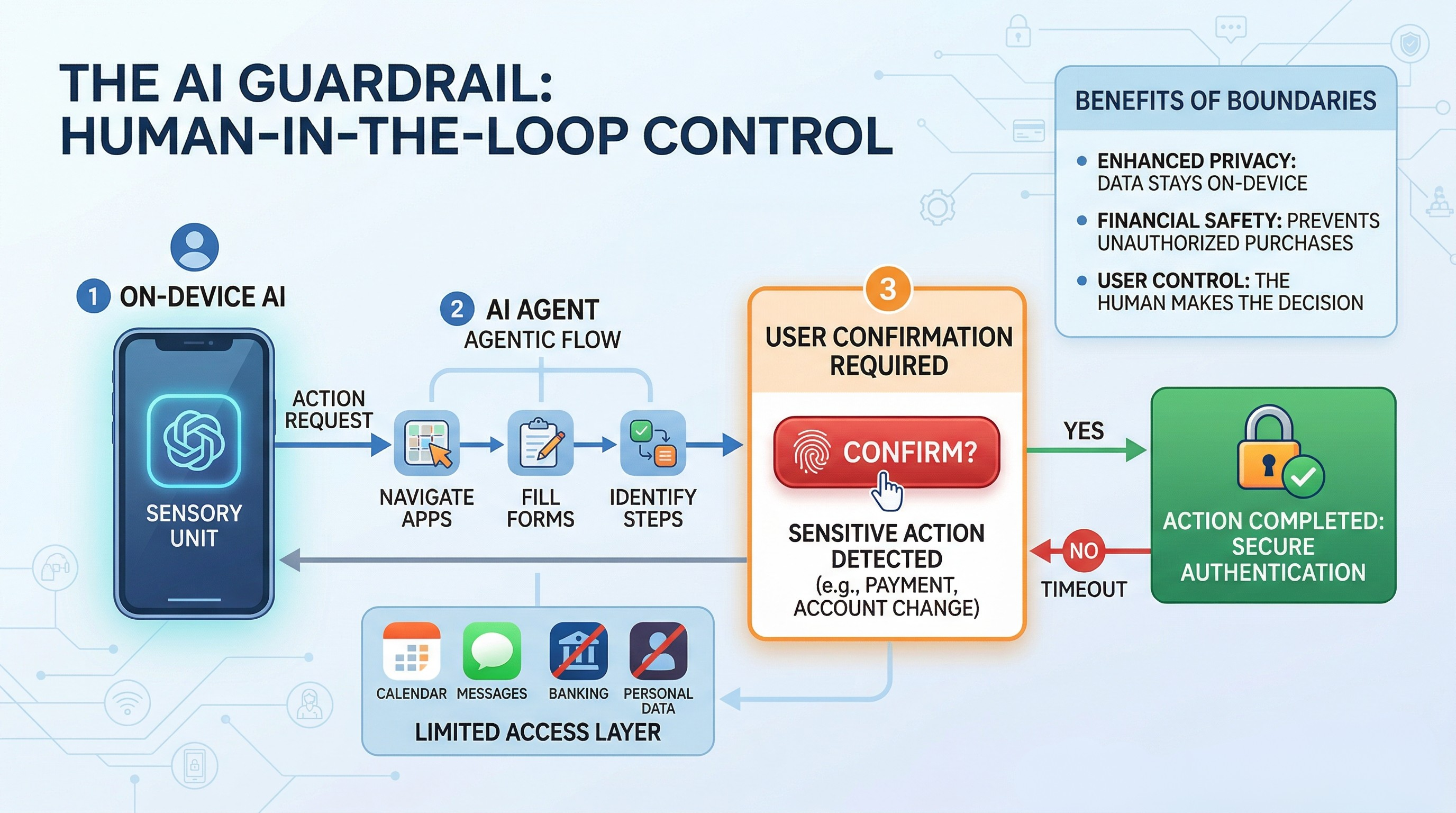

But there is a twist to this scenario, and it is a positive one. Many of these advanced digital assistants will reportedly come with "invisible leashes": limits that manufacturers enforce on how much power the AI is given. Current plans call for giving an AI access to certain parts of a person's work and daily activities while restricting its access to sensitive personal information.

What Is an AI Agent?

Before getting into the limitations of agentic AI, let us look at what it can do. Conventional assistants such as Apple's Siri and Google Assistant mostly handle simple tasks, such as answering questions or setting timers and reminders. An agentic AI, by contrast, can carry out multi-step tasks much as a person would, because it is programmed to interact with other applications.

Tom's Guide recently reported that tests of alpha and beta versions of these agents showed them to be very adept at what they are designed to do. In one example, an AI agent completed an intricate travel reservation process on a mobile device.

Specifically, the agent had effectively filled out all required forms and navigated through several screens until it arrived at the payment screen.

However, the agent did not actually click "Pay" until the user pressed a button to confirm the payment. The industry calls this a "human in the loop" model: the AI agent does the "heavy lifting" (searching for travel options, completing the forms, and navigating through the screens) but leaves the final, highly sensitive decision to the user. This intentional pause is built into the system to keep the agent from performing actions the user never intended and to keep the user from making a costly mistake.

The Human-in-the-Loop (HITL) Model

Recent tests, including those reported in Tom’s Guide, show that these agents can perform very well. In one experiment I ran while beta testing an agent, the agent successfully navigated a complex application workflow, worked through an apartment reservation, and reached the final payment screen.

However, just before clicking on ‘Pay’, the agent stopped.

The agent stopped and waited for the user to press a button to confirm the payment. The industry refers to this as a Human-in-the-Loop (HITL) model. The AI does the hard work (searching, filling out forms, and navigating), but it does not perform the most sensitive action (making a payment) without an explicit request from a human. The pause is a deliberate design choice, so that the system does not accidentally perform an action you did not expressly request or act on its own error.
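The pause described above can be pictured with a small Python sketch. It is only an illustration under stated assumptions: the `Step` class, its `sensitive` flag, and the `confirm` callback are hypothetical names invented here, not part of any real assistant's API.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Step:
    name: str
    sensitive: bool = False  # hypothetical flag: True means a human must approve


def run_workflow(steps: List[Step], confirm: Callable[[Step], bool]) -> List[str]:
    """Run steps in order, pausing at sensitive ones until a human confirms."""
    log = []
    for step in steps:
        if step.sensitive and not confirm(step):
            # The agent never performs the sensitive action on its own.
            log.append(f"stopped before: {step.name}")
            break
        log.append(f"done: {step.name}")
    return log


booking = [
    Step("search travel options"),
    Step("fill out forms"),
    Step("open payment screen"),
    Step("click Pay", sensitive=True),
]

# Simulate one user who declines and one who approves.
declined = run_workflow(booking, confirm=lambda step: False)
approved = run_workflow(booking, confirm=lambda step: True)
```

In a real product the `confirm` callback would be the on-screen button press; here it is a stand-in function so the sketch can run on its own.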

The Importance of Approval Checkpoints

When we complete a banking transaction in an app, a security step asks us to confirm our identity, for example by scanning a fingerprint, entering a code sent by text message, or answering a security question. Tech companies are now building the same kind of “checkpoints” into the rest of the smartphone ecosystem. So far, the following types of actions have been identified as requiring approval checkpoints:

1) Financial transactions (any purchase of a product or subscription to a service or any transfer of funds)

2) Account changes (changing passwords, deleting information, or changing security settings)

3) Data sharing (transferring private data from one app to another).

By building these “approval checkpoints” into their platforms, Apple and Qualcomm hope to manage the inherent risks of artificial intelligence (AI). Suppose an AI misunderstands a request such as “buying a flight to Paris” when you were only curious about the price and did not want to buy the ticket right now. The approval checkpoint gives you a chance to stop the purchase before the AI's incorrect reading costs you money.
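One way to picture the three categories above is as a lookup table that an agent consults before it acts. The following Python sketch is an assumption made for illustration: the `Action` names and the `checkpoint_for` helper are hypothetical and do not describe how Apple or Qualcomm implement checkpoints.

```python
from enum import Enum
from typing import Optional


class Action(Enum):
    # Hypothetical action names, chosen to mirror the list above.
    SEARCH_FLIGHTS = "search_flights"
    PURCHASE = "purchase"
    TRANSFER_FUNDS = "transfer_funds"
    CHANGE_PASSWORD = "change_password"
    DELETE_DATA = "delete_data"
    SHARE_DATA_BETWEEN_APPS = "share_data_between_apps"


# Actions that fall into one of the three checkpoint categories.
CHECKPOINTS = {
    Action.PURCHASE: "financial transaction",
    Action.TRANSFER_FUNDS: "financial transaction",
    Action.CHANGE_PASSWORD: "account change",
    Action.DELETE_DATA: "account change",
    Action.SHARE_DATA_BETWEEN_APPS: "data sharing",
}


def checkpoint_for(action: Action) -> Optional[str]:
    """Return the checkpoint category for an action, or None if no approval is needed."""
    return CHECKPOINTS.get(action)


# Looking up a price needs no approval; buying the ticket does.
price_check = checkpoint_for(Action.SEARCH_FLIGHTS)
ticket_purchase = checkpoint_for(Action.PURCHASE)
```

In the Paris example, checking the fare would pass straight through, while the purchase itself would be held for your approval.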

Establishing Limits on AI Data Usage

Another method of regulating AI is limiting its access to the user's data. It might seem that an AI would be more effective if it had access to everything on the user's device, but that would create a huge risk to personal privacy.

Therefore, businesses are placing restrictions on what types of data the AI can access. For example, an individual might allow the AI access to their calendar and email accounts but deny it access to their health information or private notes. Data will not flow freely between the digital services a person uses unless that person allows it.
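The calendar-versus-health example can be sketched as a deny-by-default permission list. This is a minimal Python illustration under assumptions: the `DataPermissions` class, the `read_for_agent` function, and the data source names are hypothetical and not taken from any vendor's software.

```python
from dataclasses import dataclass, field
from typing import Dict, Set


@dataclass
class DataPermissions:
    """Deny by default: the agent can read only what the user has granted."""
    granted: Set[str] = field(default_factory=set)

    def grant(self, source: str) -> None:
        self.granted.add(source)

    def revoke(self, source: str) -> None:
        self.granted.discard(source)

    def can_read(self, source: str) -> bool:
        return source in self.granted


def read_for_agent(perms: DataPermissions, source: str, store: Dict[str, str]) -> str:
    """Return data for the agent, or raise if the user never allowed this source."""
    if not perms.can_read(source):
        raise PermissionError(f"agent has no access to {source!r}")
    return store[source]


# Hypothetical on-device data, for illustration only.
device_data = {
    "calendar": "Dentist, Tuesday 10:00",
    "email": "3 unread messages",
    "health": "private",
    "notes": "private",
}

perms = DataPermissions()
perms.grant("calendar")
perms.grant("email")

calendar_entry = read_for_agent(perms, "calendar", device_data)
```

Because nothing is granted at the start, a request for `"health"` or `"notes"` raises `PermissionError` unless the user explicitly allows it.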

Security: Processing on the Device

Another significant issue with AI use is where the data resides and whether it is sent to third parties. For example, a user who has the AI manage their taxes may not want their tax information uploaded to a third party’s cloud server.

This is where hardware comes into play. Advances in on-chip computational power, like Qualcomm’s 5th generation Snapdragon processor family or Apple’s A-series chips, allow fast AI processing on the device itself. As a result, the sensitive information stored on the device does not have to leave it.

Collaborating with Partners

The AI assistant does not work alone. To reserve a room, for example, it must communicate with a hotel reservation system. Those communications need to be secure, so AI developers are building integrations with their banking and credit card partners that keep the automation safe.

Banks have strict security rules to adhere to, which provides another level of oversight. For example, a credit card provider may require extra identification before approving transactions over $50 made through an AI. These safety measures, while still being built out, represent a collective effort by all parties involved to create a secure "AI economy" for consumers.
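The $50 example amounts to a simple threshold rule. Here is a minimal Python sketch of it; the `authorize` function, its parameters, and the returned status strings are hypothetical, and a real card provider's rules would involve far more than one check.

```python
from decimal import Decimal

# Threshold taken from the example above; a real provider would set its own.
STEP_UP_THRESHOLD = Decimal("50.00")


def authorize(amount: Decimal, initiated_by_agent: bool, extra_id_verified: bool) -> str:
    """Ask for extra identification on agent-made purchases over the threshold."""
    if initiated_by_agent and amount > STEP_UP_THRESHOLD and not extra_id_verified:
        return "needs_extra_identification"
    return "approved"


small_purchase = authorize(Decimal("19.99"), initiated_by_agent=True, extra_id_verified=False)
large_purchase = authorize(Decimal("320.00"), initiated_by_agent=True, extra_id_verified=False)
large_verified = authorize(Decimal("320.00"), initiated_by_agent=True, extra_id_verified=True)
```

Amounts are held as `Decimal` rather than floating-point numbers so that comparisons against the threshold are exact.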

Transition from Enterprise to Consumer

For years, the conversation around AI and its governance (rules) centered on enterprise-level concerns: how to protect a company's secrets and how to prevent security breaches caused by large-scale automation.

Today, that concern is shifting as AI enters the consumer market on iPhones and Android devices, which means AI governance must also be user-friendly. If the security measures are too cumbersome, users will likely disable them. If the controls are too lenient, users may be scammed out of their money. Companies such as Apple and Qualcomm are working to make these security measures clear, fast, and easy to use.

Boundaries in Autonomous Systems: The Future

As AI systems become more capable of completing tasks on their own, the need for boundaries on that independence also grows. When a chatbot makes an error while writing a poem, the result is just a silly mistake. When an AI agent makes an error while booking a flight, the result can be a costly one.

We are now moving toward controlled environments rather than full automation ("full AI independence"). The idea of a phone that runs your life for you is giving way to a partnership between the human (manager/pilot) and the computer (assistant/worker). The AI will work tirelessly, but the human gives permission by saying the final “okay” before the task goes ahead.

For those who are very technical, this assistant/manager partnership between AI and human may seem like a limit on their desire for total automation; however, we believe it is the best way to proceed. It helps ensure that we keep control and authority over our devices, no matter how intelligent they become.

Next time you hear about a new AI feature being added to your smartphone or computer, consider not only what it can do, but also what it cannot do without your consent. These boundaries are not defects; they are one of the key components of the overall system. They are what will allow us to keep trusting the AI we carry in our pockets every day.
